May 4, 2017. Printed in Measure IT Spring 2017.

The cloud adoption rate continues to trend upward as providers mature and offer more robust, secure and hybrid solutions, pushing more and more organizations closer to the cloud. Gartner predicts that by 2020, a corporate “no-cloud” policy will be as rare as a “no-internet” policy is today. By 2020, more compute power will have been sold by IaaS and PaaS cloud providers than sold and deployed into enterprise data centers.

Despite the many benefits of cloud (flexibility, agility, elasticity, speed of deployment and cost efficiency, to name a few) and various cloud service offerings like IaaS (Infrastructure-as-a-Service), PaaS (Platform-as-a-Service) and SaaS (Software-as-a-Service), several organizations are still reluctant to join the cloud bandwagon.

This reluctance, however, isn’t always resistance to change. Organizations have genuine concerns and face significant challenges throughout their cloud journey, including (but not limited to) security, privacy and compliance; availability, reliability and service quality; integration with existing infrastructure; and visibility and control, or the lack thereof.

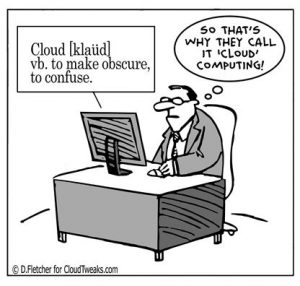

While cloud providers and their solutions are evolving to address many of these concerns through enhanced security controls, integration-friendly hybrid options, and more standardized processes and SLAs, there are still questions about the unpredictability of performance and availability, coupled with the lack of visibility and loss of control. Every time I think about the obscurity of cloud deployments, this comic comes to mind.

But why is visibility so important to an IT organization? Traditional data center management protocols have focused on individual technology elements like Servers, Storage, Network and Firewalls in silos. This approach has been based on the implicit assumption that if each technology element was operational, the application would be operational too. Along similar lines, traditional availability and performance monitoring paradigms have also been reflective of this assumption, focused on system resource monitoring of individual components like Web Servers, Application Servers, Middleware and Databases. Not being able to see, monitor and control system components as in a traditional data center puts the organization at the mercy of a cloud vendor and the vendor’s commitment and capability to honor and successfully meet their contractual SLAs.

And this is by no means an easy problem to solve. Why, you ask? Because elasticity, the ability to scale applications up and down as demand grows and shrinks, is fundamentally one of the most important benefits that cloud computing provides to consumers. For cloud vendors to provide, and IT organizations to fully realize, the flexibility and cost benefit of elasticity, servers and application instances will have to come and go all the time. This obscures the underlying infrastructure and breaks the traditional paradigm of managing systems in silos.

Over the past years, this problem has created an opportunity for performance monitoring companies and given birth to CAPM – Cloud Application Performance Management.

Application Performance Management in Cloud

Before we jump into CAPM, let’s first take a moment to understand what APM means.

Simply put, application performance management is the art of Measuring, Monitoring and thus, Managing the performance, availability, and user experience of software applications. APM monitors the speed at which transactions are performed both by end-users and by the systems and infrastructure that support a software application, providing an end-to-end view of potential bottlenecks and interruptions to maintain an optimal level of service.

As organizations move newer enterprise applications to the cloud through IaaS, PaaS or SaaS while still holding on to some legacy systems on the floor, tools that monitor and manage the performance and availability of applications across a distributed computing environment have become a must-have. And that is what CAPM provides: the capability to look at the full spectrum of application performance, shifting the focus from infrastructure components to end-user experience.

New-generation APM tools for cloud enable IT to track end-to-end transaction flows across complex hybrid cloud environments as data traverses the various components in the infrastructure. This allows businesses to clearly understand and manage service dependency and delivery no matter where systems and applications are sourced, thus helping them regain visibility and control.

Another important factor that makes APM essential to cloud environments relates to performance unpredictability. Performance is especially unpredictable for resources like disk and network I/O where VMs contend for capacity on shared physical hardware. This contention can impact application performance in seemingly random and unreproducible ways. Good APM tools can provide the information required to detect these anomalies and take corrective action.

Another cloud must-have is a service level agreement. Most enterprises have defined SLAs in contracts with their cloud service providers as a way of ensuring the performance of applications. However, given the complexity of hybrid cloud environments, with some applications on-premises and some in the cloud, and some more vendor-managed (SaaS) than others (IaaS/PaaS), ensuring end-user experience simply through contractual SLAs with individual vendors does not suffice. Just monitoring end-user experience is also not enough. Investing in a good APM solution that not only monitors the end-user experience of business transactions but also tracks the response times of the individual components and services that make up those transactions is critical for ensuring that your cloud providers are meeting their SLAs.
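As a rough illustration of tracking component response times against SLAs, the sketch below computes the share of samples that fall within a response-time threshold for each component. The component names, thresholds, targets and sample values are all hypothetical; a real APM tool would supply the measurements, and a real contract would define the terms.

```python
def sla_compliance(samples_ms, threshold_ms):
    """Return the fraction of response-time samples at or under the threshold."""
    if not samples_ms:
        raise ValueError("no samples to evaluate")
    within = sum(1 for s in samples_ms if s <= threshold_ms)
    return within / len(samples_ms)


def sla_report(measurements, slas):
    """Compare each component's achieved compliance with its SLA target.

    `slas` maps a component name to (threshold_ms, target_fraction).
    """
    report = {}
    for component, (threshold_ms, target) in slas.items():
        achieved = sla_compliance(measurements[component], threshold_ms)
        report[component] = {"achieved": achieved, "met": achieved >= target}
    return report


# Hypothetical response times (ms) collected per component.
measurements = {
    "saas_crm": [200, 250, 900, 300, 280, 260, 240, 1200, 270, 290],
    "iaas_api": [80, 90, 85, 95, 100, 88, 92, 91, 87, 89],
}

# Hypothetical SLA terms: 95% of requests within the given threshold.
slas = {
    "saas_crm": (500, 0.95),
    "iaas_api": (100, 0.95),
}

report = sla_report(measurements, slas)
for component, result in report.items():
    print(component, result)
```

Reporting per component rather than only per transaction is what makes it possible to attribute a missed target to a specific provider.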

Making the right APM choice

When it comes to performance monitoring, there is no ‘one-size-fits-all’. There are multiple facets to application performance monitoring. The right approach to APM (whether through a single integrated solution or through a suite of tools) is largely dependent on the technology, type of cloud solution vs in house deployments and the flexibility your cloud vendors provide.

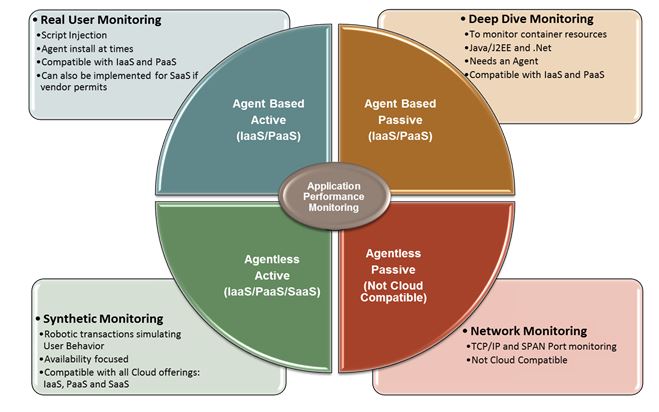

When thinking about monitoring, it helps to reflect upon Larry Dragich’s representation of the duality of APM, which describes the relation between active and passive monitoring through agent-based and agentless deployments. Depending upon your cloud solution, not every deployment option will be available to you. For example, an agent-based deployment on a vendor-managed SaaS solution may be an overreach. Depending upon your requirements, you may have to choose APM technologies that land in one or more quadrants of that duality to get an end-to-end view that combines end-user and service-level response times with the bottom-up metrics from system and application container resources.

With the rising complexity of the business service stack, effective digital experience management in the more dynamic environment of cloud-based applications requires a unified, single-pane-of-glass view across hybrid environments. It also requires the ability to auto-discover and visualize the topology mapping between end-user services and the various cloud and in-house components that support them. You need to be able to correlate end-user experience measurements with the bottom-up metrics from your IT infrastructure and cloud applications to monitor and verify the performance and availability of services.

When struggling with APM vendor selection, Gartner is a good place to start. Gartner’s yearly report evaluates APM suites for solutions that facilitate monitoring across three dimensions: digital experience monitoring (DEM); application discovery, tracing and diagnostics (ADTD); and application analytics (AA). All three are critical to holistic CAPM.

Several vendors have been identified as leaders in the APM space, including Dynatrace, AppDynamics and New Relic, and offer complete cloud APM solutions. Cloud vendors are entering the APM space as well. For instance, AWS recently announced a distributed tracing service (X-Ray) to help developers analyze and debug the performance of distributed applications within the AWS environment.

Some other things to consider

Many monitoring solutions check for server availability. In the cloud, however, servers can come and go all the time, so alerting on server availability is not a true indication of a problem. It may, however, help to look at availability from a service or application standpoint using synthetic monitoring. Similarly, many of the system-level metrics that APM tools and server monitoring tools rely on are less relevant in some cloud deployments. Instead, it is best to understand performance in terms of business transactions and end-user experience. Each business transaction will include hops to one or more downstream systems, components or tiers. Defining business transactions that span all of them will help you understand how each individual system’s performance affects end users. This can be achieved through both real-user and synthetic-user monitoring, as long as the definition of a synthetic transaction is complete, i.e. it spans all sub-tiers.
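To make the idea of a complete business transaction concrete, here is a minimal sketch that records the hops of one synthetic transaction run and checks whether it touched every tier it is supposed to cover. The `Hop` type, the tier names and the timings are hypothetical and not taken from any particular APM product.

```python
from dataclasses import dataclass


@dataclass
class Hop:
    """One hop of a business transaction: the tier visited and the time spent."""
    tier: str
    elapsed_ms: float


def summarize(hops, required_tiers):
    """Summarize one transaction run and report tiers it failed to cover."""
    seen = {h.tier for h in hops}
    missing = sorted(set(required_tiers) - seen)
    slowest = max(hops, key=lambda h: h.elapsed_ms).tier if hops else None
    return {
        "total_ms": sum(h.elapsed_ms for h in hops),
        "slowest_tier": slowest,
        "missing_tiers": missing,
        "complete": not missing,
    }


# Hypothetical synthetic run that never reaches the payment gateway.
hops = [Hop("web", 40.0), Hop("app", 120.0), Hop("database", 65.0)]
required_tiers = ["web", "app", "database", "payment-gateway"]

result = summarize(hops, required_tiers)
print(result)
```

A run flagged as incomplete is a hint that the synthetic script does not exercise the full transaction path, so a problem in the uncovered tier would go unnoticed.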

While it is important to know when a business transaction is behaving abnormally, it is equally important to detect performance anomalies at the tier level. If the response time of a business transaction, as a whole, is slow by one standard deviation (which is acceptable) but one of its tiers is slower by a factor of three standard deviations, you may have a problem developing, even though it hasn’t affected your end users yet. Chances are the tier’s problem will evolve into a systemic problem that causes multiple business transactions to suffer.
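The standard-deviation comparison above can be sketched with a simple z-score check against a baseline. This is only an illustration under stated assumptions: the baseline samples, the latest readings and the three-sigma threshold are hypothetical, and commercial APM tools typically use more sophisticated baselining.

```python
from statistics import mean, stdev


def z_score(history, latest):
    """Return how many sample standard deviations `latest` lies above the baseline mean."""
    sigma = stdev(history)
    if sigma == 0:
        return 0.0  # flat baseline: treat as no measurable deviation
    return (latest - mean(history)) / sigma


def flag_anomalies(baselines, latest, threshold=3.0):
    """Return the names whose latest response time is at least `threshold` sigmas slow."""
    return sorted(
        name
        for name, history in baselines.items()
        if z_score(history, latest[name]) >= threshold
    )


# Hypothetical baseline response times in milliseconds.
baselines = {
    "transaction": [480, 500, 520, 510, 490],
    "web": [98, 100, 102, 101, 99],
    "database": [48, 50, 52, 51, 49],
}

# Hypothetical latest readings: the transaction as a whole is roughly one
# sigma slow, while the database tier is well past three sigmas.
latest = {"transaction": 515, "web": 101, "database": 56}

print(flag_anomalies(baselines, latest))
```

In this example the transaction as a whole stays under the threshold while the database tier is flagged, which is the early-warning situation described above.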

Irrespective of which APM solution you deploy, whether you choose to monitor real users or create synthetic transactions, and whether you choose to deploy agents in your infrastructure, inject JavaScript, use metadata tags, go agentless or combine multiple solutions to make your own, the only way IT organizations can keep up with the ever-changing complexity of cloud infrastructures is by moving away from traditional, ‘in-silos’ data center paradigms towards a holistic approach to application performance management for mission-critical applications.

Post by Priyanka Arora

References

- https://cloudtweaks.com/2012/10/the-lighter-side-of-the-cloud-obscurity/

- http://www.gartner.com/newsroom/id/3354117

- https://www.gartner.com/doc/reprints?id=1-3M8KIVD&ct=161118&st=sb

- www.linkedin.com/pulse/20140721113109-174117200-the-active-passive-monitoring-duality-of-apm

Disclaimer: The views and opinions presented in this article are my own and do not represent/express the views or opinions of my employer MUFG Union Bank N.A.